GSPs, and EC2

I’ve been running servers in some capacity or another since 2011, and with such a track record you inevitably move from one server, host, and company to another.

Originally I was simply renting game servers from a generic GSP, then a shared web-hosting service from another company.

Eventually, however, the demand became too great, and in 2011 I switched to EC2 instances for the vast majority of my services.

Aside from the generally exorbitant pricing of EC2 and the slightly underpowered single-core performance, it served me very well until demand dropped a little and it was no longer economically viable to host on EC2.

Dedicated Woes

So in 2013 I moved to a dedicated host in Dallas, TX, where for a reasonable, if slightly high, fee I rented a 2-core Nehalem server running Windows, with an SSD C: drive and two 500GB HDDs in RAID.

This machine served me well enough: it hosted Mumble, a myriad of SRCDS servers, and our XMPP server.

However, it had some very noteworthy drawbacks.

Because it was Windows-based, security was always a concern, and oftentimes Linux-native packages were impossible to run.

There was also the issue of networking. This host had packet loss on a daily basis, up to 8% in certain windows.

Considering this ran Mumble (VoIP) and game servers, this was a major irritation.

In late 2016 the server mainboard suffered a critical failure, and I was transitioned to a Sandy Bridge-based 4-core server. By this point I was starting to get some actual legwork out of my servers. Four cores of Sandy Bridge performance weren’t exactly top of the line, and anything above that was exorbitantly expensive.

Coupled with the networking problems, this pushed me to start planning for the future.

The future is Zen

I always knew I wanted to own my server hardware, but a lot of things needed to line up: pricing, data-center availability, and hardware.

That’s why in February, when AMD blew the lid off of Zen, their 8-core beast, I knew it was time.

With the hardware question answered, it was time to look into data-centers. I was lucky in that I live in an area with a good number of these, so after a couple of months of shopping around and then contract negotiation, I had a home for the server I was going to build.

Specs of salt

It was now early 2018, Zen+ had just shipped, and I was getting my build together: finding a proper 2U chassis, a PSU, and components to fit into a nice, compact, and powerful server.

The final build ended up looking like this:

- AMD Ryzen 7 1700X

- ASRock B350M Pro4

- Corsair Vengeance LPX 32GiB (2x16GiB)

- Samsung 860 Pro 256GB

- Seasonic 400W 2U

That is a significant chunk of performance to stick in a 2U, especially for the price.

Say hello…

NACL on the workbench

Racked and Loaded

With the hardware for NACL in order, and my contract signed, it was time to move in and get migrated.

Moving from Windows to Linux meant virtually everything was set up brand new, and the migration itself was mostly a matter of copying databases. Unfortunately, a lot of old SRCDS mods don’t support Linux, so I’m unable to host Obsidian Conflict and The Hidden.

The new data-center is a significant step up from the old dedicated host, with far better reliability.

The amount of control I get is liberating. If I want to stick in a new CPU, or put two different boxes in the rack space, I am free to do so.

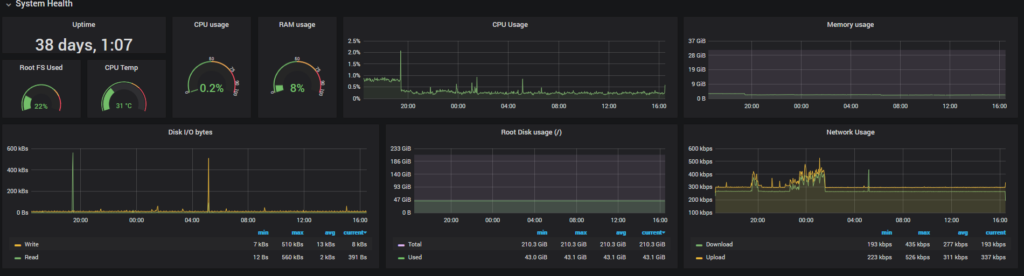

Monitoring

Another benefit of Linux is the vast range of options for monitoring the health of the server. I’ve taken great advantage of this, tracking both system and network performance.
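To give a rough idea of what tracking system and network performance can look like at the lowest level, here is a minimal sketch in Python. It is not the tooling actually running on NACL; it only parses the standard Linux `/proc/loadavg` and `/proc/net/dev` files. The function names (`parse_loadavg`, `parse_net_dev`, `report`) are made up for this illustration.

```python
from __future__ import annotations

from pathlib import Path


def parse_loadavg(text: str) -> tuple[float, float, float]:
    """Return the 1, 5 and 15 minute load averages from /proc/loadavg text."""
    one, five, fifteen = text.split()[:3]
    return float(one), float(five), float(fifteen)


def parse_net_dev(text: str) -> dict[str, dict[str, int]]:
    """Return per-interface byte and drop counters from /proc/net/dev text."""
    stats: dict[str, dict[str, int]] = {}
    # The first two lines of /proc/net/dev are column headers.
    for line in text.splitlines()[2:]:
        name, _, counters = line.partition(":")
        fields = counters.split()
        if len(fields) < 16:
            continue  # skip anything that does not look like a counter row
        # Fields 0-7 are receive counters, fields 8-15 are transmit counters.
        stats[name.strip()] = {
            "rx_bytes": int(fields[0]),
            "rx_dropped": int(fields[3]),
            "tx_bytes": int(fields[8]),
            "tx_dropped": int(fields[11]),
        }
    return stats


def report() -> None:
    """Print a one-off snapshot; only works on a Linux host with /proc."""
    print("load:", parse_loadavg(Path("/proc/loadavg").read_text()))
    for iface, counters in parse_net_dev(Path("/proc/net/dev").read_text()).items():
        print(iface, counters)
```

Calling `report()` on a Linux box prints a single snapshot. Since the counters in `/proc/net/dev` are cumulative, a real setup would sample them on an interval and graph the difference between samples.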

The bright future

That wraps up most of the big ideas so far, but I’m excited to grow my little server collection in the future, both by upgrading NACL and, hopefully, by renting additional space and adding more servers.

Only time will tell where this venture leads.